Your RAG Passed Every Test and Failed Every User

Eval overfitting is real and underdiagnosed. Most RAG systems are built on a flat, static, insider-authored approximation of organizational knowledge. They don't have a model of the organization. They have documents about it.

Most engineers have a solid intuition about overfitting. Train a model too hard on your training data and it stops generalizing. Benchmark performance looks great. Production falls apart.

The same thing happens to RAG systems. Almost nobody talks about it.

What it looks like

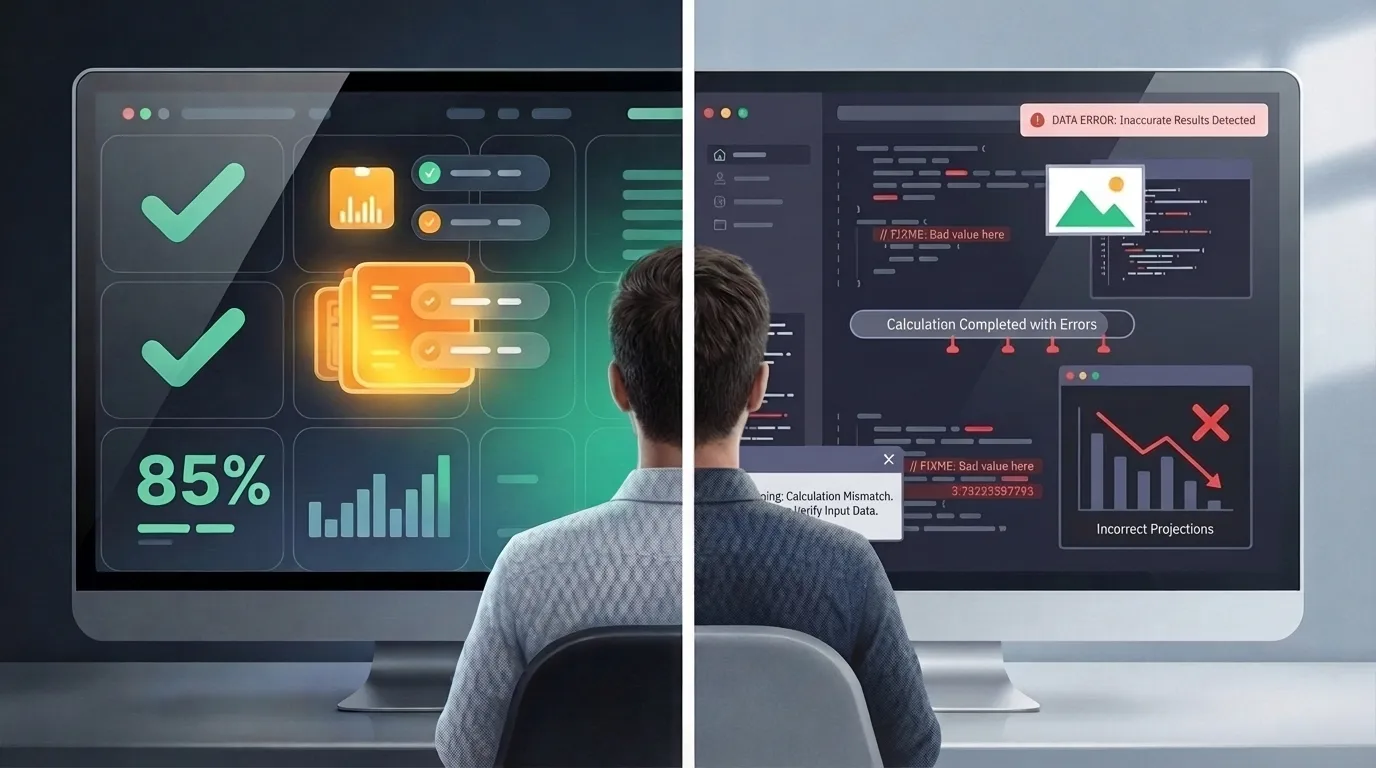

You build a golden dataset before launch. A set of questions your stakeholders care about, some red-teaming cases, a few persona-based queries. You run evals. You tune thresholds, prompts, retrieval parameters. Answer rate hits 85%. You ship.

Then real users show up. Answer rate drops to 52%.

Here is a concrete signal that something is wrong: take your golden dataset questions and reword them. Don't change the meaning, just change the phrasing. If your recall drops from 0.91 to 0.22 on the same questions asked differently, you don't have a system that understands your domain. You have a system that memorized the phrasing.
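The rewording check can be scripted. What follows is a minimal sketch, not a full eval harness: the two-document corpus, the keyword-overlap `toy_retrieve`, and the names `recall_at_k` and `paraphrase_gap` are all hypothetical stand-ins for your own retriever and golden dataset.

```python
from typing import Callable, Dict, List, Set, Tuple

Case = Tuple[str, Set[str]]  # (question, ids of documents that should be retrieved)


def recall_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def mean_recall(retrieve: Callable[[str], List[str]], cases: List[Case], k: int) -> float:
    """Average recall@k over a list of cases."""
    scores = [recall_at_k(retrieve(question), relevant, k) for question, relevant in cases]
    return sum(scores) / len(scores)


def paraphrase_gap(retrieve: Callable[[str], List[str]],
                   golden: List[Case], reworded: List[Case], k: int = 5) -> Dict[str, float]:
    """Compare recall on the golden questions with recall on their rewordings."""
    original = mean_recall(retrieve, golden, k)
    rephrased = mean_recall(retrieve, reworded, k)
    return {"original": original, "reworded": rephrased, "gap": original - rephrased}


# Hypothetical toy corpus and retriever, only here to make the sketch runnable.
CORPUS = {
    "oncall-identity": "on-call rotation for identity infrastructure",
    "deploy-guide": "deployment guide for the billing service",
}


def toy_retrieve(query: str) -> List[str]:
    """Rank documents by the number of words they share with the query."""
    words = set(query.lower().replace("?", "").split())
    scored = [(len(words & set(text.split())), doc_id) for doc_id, text in CORPUS.items()]
    return [doc_id for score, doc_id in sorted(scored, reverse=True) if score > 0]


GOLDEN = [("what is the on-call rotation for identity infrastructure?", {"oncall-identity"})]
REWORDED = [("who do I talk to about the auth service going down?", {"oncall-identity"})]

report = paraphrase_gap(toy_retrieve, GOLDEN, REWORDED, k=1)
```

A large `gap` on questions that mean the same thing is the signal described above. A real run would swap in the production retriever and have someone outside the team write the rewordings.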

The golden dataset was written by insiders. Insiders ask questions in insider language. Real users don't. That gap is the problem.

Why it happens

Your golden dataset reflects your corpus. Your corpus was built by the same people who wrote your golden dataset. Website copy, internal docs, manually authored content written to steer how the LLM talks about the organization. All of it written by people who know how the company thinks about itself.

There is a Conway's Law version of this problem. Your corpus reflects the org structure of whoever built it. Internal teams write about what they own, in the language they use internally. The retrieval system learns that structure. Users don't share it.

It gets worse. RAG makes it trivially easy to embed bad data: outdated runbooks, deprecated architecture docs, decisions that got reversed six months ago. All of it gets retrieved with the same confidence as something written yesterday. Similarity search on its own carries no freshness signal. The corpus doesn't know what it doesn't know.
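To see what a missing freshness signal means in practice, here is a small sketch that re-ranks retrieval hits by age. Everything in it is an assumption made for illustration: the `Hit` record, the `last_updated` field, and the 180-day half-life are not taken from any particular vector store.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Hit:
    doc_id: str
    similarity: float   # score from the retriever, higher is better
    last_updated: date  # assumed metadata; many corpora do not track this reliably


def freshness_weighted(hits: List[Hit], today: date, half_life_days: float = 180.0) -> List[Hit]:
    """Re-rank hits so similarity is halved for every `half_life_days` of age."""
    def score(hit: Hit) -> float:
        age_days = max((today - hit.last_updated).days, 0)
        return hit.similarity * 0.5 ** (age_days / half_life_days)

    return sorted(hits, key=score, reverse=True)


# Hypothetical hits: a stale runbook that matches the query wording slightly better.
TODAY = date(2024, 6, 1)
HITS = [
    Hit("old-runbook", similarity=0.90, last_updated=date(2022, 6, 1)),
    Hit("current-runbook", similarity=0.80, last_updated=date(2024, 5, 22)),
]

by_similarity = sorted(HITS, key=lambda h: h.similarity, reverse=True)
by_freshness = freshness_weighted(HITS, TODAY)
```

Ranked by similarity alone, the two-year-old runbook wins. With the decay applied, the current one does. This is a patch, though: it demotes old text, but it cannot tell the system that a decision was reversed.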

So the retrieval system gets tuned on insider vocabulary. Thresholds, prompts, and retrieval parameters get adjusted until the system surfaces documents that match insider phrasing. Everything optimizes for the internal view of the organization.

An engineer asks "who do I talk to about the auth service going down?" The system was tuned on "what is the on-call rotation for identity infrastructure?" It misses. There is no structured model connecting those two questions to the same underlying reality.

Better retrieval doesn't fix this. A smarter search agent doing multi-hop queries will just confirm faster that the answer isn't in the corpus.

The substrate problem

The corpus needs structure. Not more documents, structure. Explicit relationships between teams, systems, work, and outcomes. When those exist and are live, the answer to "who do I talk to about the auth service going down?" doesn't require a document with that exact phrasing. It's derivable from actual evidence: the repos, the incident history, the service ownership, the people who've touched it recently.
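As a rough illustration of deriving an answer from relationships rather than from matching text, consider the sketch below. It is not SixDegree's implementation. The `Evidence` record, the alias table, the weights, and the people named are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Evidence:
    service: str
    person: str
    kind: str  # "owner", "incident", or "commit"


# Assumed weights: declared ownership counts most, then incident response, then commits.
WEIGHTS = {"owner": 5, "incident": 3, "commit": 1}

# Different phrasings resolve to the same underlying entity.
ALIASES = {
    "auth service": "auth-service",
    "identity infrastructure": "auth-service",
}

EVIDENCE = [
    Evidence("auth-service", "dana", "owner"),
    Evidence("auth-service", "lee", "incident"),
    Evidence("auth-service", "lee", "commit"),
    Evidence("auth-service", "sam", "commit"),
    Evidence("billing", "sam", "owner"),
]


def resolve_service(question: str) -> Optional[str]:
    """Map a free-text question to a known service via the alias table."""
    text = question.lower()
    for alias, service in ALIASES.items():
        if alias in text:
            return service
    return None


def who_to_ask(service: str, evidence: List[Evidence], top_n: int = 2) -> List[str]:
    """Rank people by the weighted evidence linking them to the service."""
    scores: Counter = Counter()
    for item in evidence:
        if item.service == service:
            scores[item.person] += WEIGHTS.get(item.kind, 0)
    return [person for person, _ in scores.most_common(top_n)]


insider = resolve_service("what is the on-call rotation for identity infrastructure?")
outsider = resolve_service("who do I talk to about the auth service going down?")
contacts = who_to_ask(outsider, EVIDENCE) if outsider else []
```

Both phrasings resolve to the same service, and the contact list comes from the evidence, not from a document that happens to use the right words. In a live system the alias table and evidence would be built from source systems, not hand-written as they are here.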

The answer stops being authored. It gets derived.

This is what SixDegree does. Rather than asking teams to maintain documentation that approximates organizational reality, SixDegree derives structured context directly from the systems engineers already use: GitHub, Jira, Argo, Vercel. The organizational model stays live because it's built from live data, not from wikis that are obsolete minutes after they're authored.

A wiki is not a knowledge base. It's a wasteland of good intentions.

When the substrate is structured and current, the eval problem changes too. You're no longer optimizing a system against the vocabulary of whoever wrote your golden dataset. You're operating on a model of what the organization actually is. Real production queries stop being surprising because the system understands the underlying relationships, not just the phrasing.

A few practical things

Separate the corpus from the eval. If the same people built both, you have a feedback loop that hides the problem until production.

Treat answer rate on real traffic as the only metric that matters. If your golden dataset shows 85% and real traffic shows 52%, the golden dataset is lying to you.
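Tracking that gap takes very little code. This sketch assumes you log, for each question, whether the system produced an answer or declined. The `Interaction` record and its `answered` flag are hypothetical, and the sample counts are made up to reproduce the 85% and 52% figures above.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Interaction:
    question: str
    answered: bool  # True if the system returned an answer rather than declining


def answer_rate(interactions: Iterable[Interaction]) -> float:
    """Share of interactions in which the system answered."""
    items = list(interactions)
    if not items:
        return 0.0
    return sum(1 for item in items if item.answered) / len(items)


def eval_gap(golden: Iterable[Interaction], production: Iterable[Interaction]) -> float:
    """Golden-set answer rate minus production answer rate."""
    return answer_rate(golden) - answer_rate(production)


# Made-up sample: 17 of 20 golden questions answered, 13 of 25 production questions answered.
GOLDEN_RUN = [Interaction(f"golden-{i}", answered=i < 17) for i in range(20)]
PRODUCTION_LOG = [Interaction(f"user-{i}", answered=i < 13) for i in range(25)]

gap = eval_gap(GOLDEN_RUN, PRODUCTION_LOG)
```

A gap that stays large, or grows as traffic diversifies, points at the dataset and not at the users.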

When you hit a content gap, ask whether writing more content is actually the fix. It works once. It doesn't scale, and it makes the insider vocabulary problem worse over time.

The short version

Eval overfitting is real and underdiagnosed. But it's a symptom. The root cause is that most RAG systems are built on a flat, static, insider-authored approximation of organizational knowledge. They don't have a model of the organization. They have documents about it.

A better eval framework won't fix that. A live, structured substrate will.
